Promptable image segmentation with Meta AI's Segment Anything Model

How to cut out any object in any image with a single click. Also in this edition: How to chain multiple class methods in Python

Happy Wednesday everyone and welcome to your midweek dose of technology, data science, and AI!

Before we dive in, let’s take a moment to appreciate the enduring impact of Moore’s Law on the tech industry. On this day in 1965, Electronics Magazine published an article by Gordon Moore on the future of semiconductor components, in which he observed that the number of transistors on an integrated circuit had doubled approximately every year and predicted that this trend would continue for at least another decade. (In 1975, he revised the pace to a doubling roughly every two years, the version most often quoted today.) Five years after the original article, in 1970, the press coined the term we all know today: Moore’s Law. Moore’s prediction inspired chipmakers to invest in R&D to create smaller and more powerful microchips, leading to the development of the personal computer and other consumer electronics that we’re now glued to all day.

This edition contains:

✂️ Meta’s Segment Anything Model (SAM)

🔗 Method chaining in Python

Image segmentation with Meta’s SAM

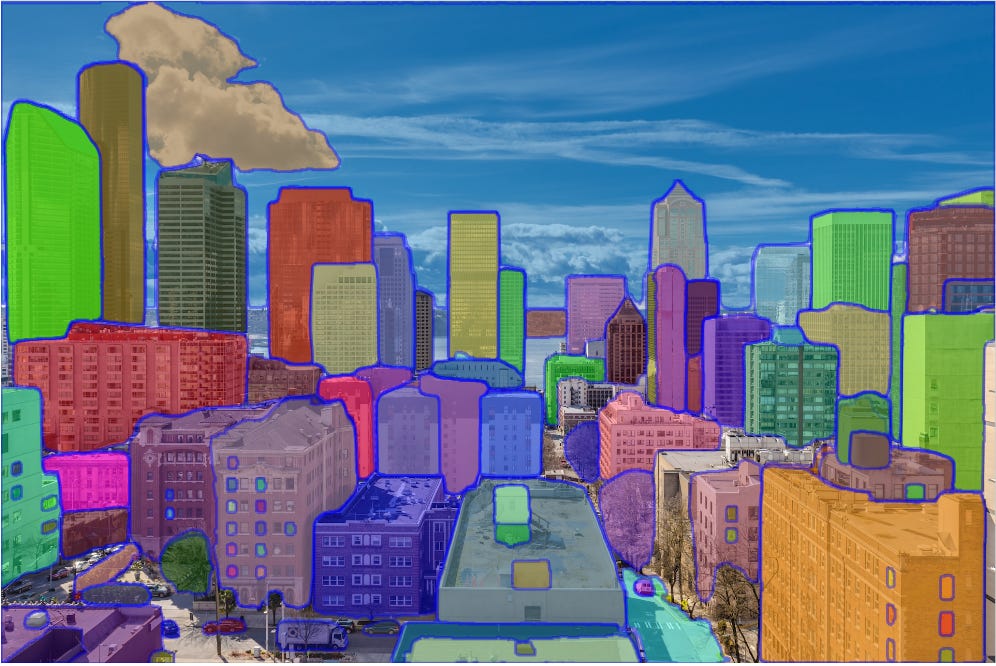

Earlier this month, Meta AI released a promptable foundation model for image segmentation called Segment Anything Model, or SAM for short. The model’s promptable interface provides it with flexibility and enables it to be used in a variety of segmentation tasks by simply engineering the right prompt (clicks, boxes, etc.). For instance, the user can interactively click on or draw bounding boxes around objects in order to prompt the model to include or exclude these areas in the segmentation process.

The inspiration for this approach - perhaps not surprisingly - was taken from natural language processing, where, through prompting, foundation models such as GPT-4 are capable of zero-shot and few-shot generalization to new datasets and tasks.

Zero-shot learning refers to the ability of the model to recognize new classes that were not part of its training set, whereas few-shot learning refers to the ability to learn to recognize new classes from only a few examples.

Another factor that motivated this approach was the lack of readily available, annotated training data for image segmentation tasks. In a cyclic way, annotators leveraged SAM to interactively annotate images, which, in turn, were then used to update SAM itself. This cycle was repeated multiple times in order to improve both the model and the dataset. In addition, to increase the diversity of the segmentation masks, the authors used a combination of fully automatic annotation and assisted annotation processes.

In computer vision, a segmentation mask is a binary image that marks the pixels in an image according to which object or area they belong to.
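To make the idea of a mask a bit more tangible, here is a minimal sketch in plain Python. The tiny 4x4 image and the brightness threshold are made up for illustration; SAM predicts its masks with a neural network, not with a threshold, and in practice you would typically store images and masks as arrays rather than nested lists.

```python
# A tiny 4x4 grayscale "image" (pixel intensities 0-255), made up for illustration.
image = [
    [10, 12, 200, 210],
    [11, 205, 220, 215],
    [9, 13, 198, 14],
    [10, 12, 11, 13],
]

# Binary mask: 1 marks pixels that belong to the object, 0 marks background.
# Assumption for this sketch: bright pixels (intensity > 128) are the object.
mask = [[1 if pixel > 128 else 0 for pixel in row] for row in image]

# Applying the mask "cuts out" the object: background pixels are set to 0.
cutout = [
    [pixel * keep for pixel, keep in zip(image_row, mask_row)]
    for image_row, mask_row in zip(image, mask)
]

# Number of pixels assigned to the object.
object_area = sum(sum(row) for row in mask)
```

The mask has the same height and width as the image, which is what makes it possible to line the two up pixel by pixel.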

Lastly, for scaling purposes, fully automated mask creation was used to obtain the final dataset, called SA-1B, which includes a remarkable 1.1 billion segmentation masks collected from ~11 million images - by far the largest segmentation dataset to date.

How it works:

SAM was trained to return a valid segmentation mask for any prompt that provides information on what to segment in an image. “Valid”, in this context, means that the model must return a reasonable mask even when the prompt is ambiguous (“for example, a point on a shirt may indicate either the shirt or the person wearing it”). In this situation, a valid mask is one that segments at least one of these objects. This task is used both for pretraining the model and for solving downstream segmentation tasks via prompting.

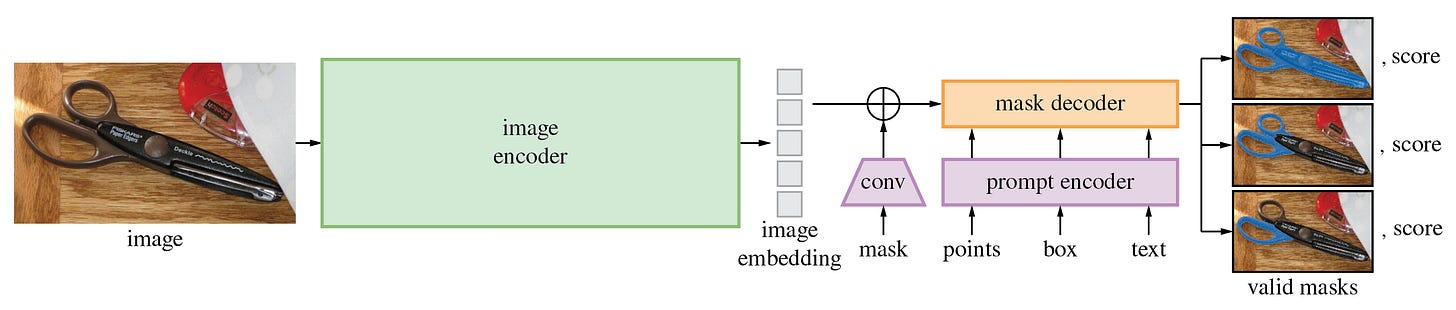

When the model is given an input image, a corresponding one-time embedding is produced by an image encoder. A lightweight encoder is used to convert any prompt, in real-time, into an embedding vector. The final segmentation masks are then predicted through the combination of these two sources by a lightweight decoder.

An encoder is a neural network that converts an input (e.g. an image) into a latent representation or a set of features that capture the essence of the input in a lower-dimensional space. By contrast, a decoder is a neural network that takes the latent representation generated by the encoder and produces an output (e.g. a reconstructed image) from it.

While the initial computation of the image embedding takes a few seconds, the model is able to produce a segment in just 50 milliseconds after being given a prompt in a web browser.
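The reason this split is so fast in practice is that the expensive step runs once per image, while every click only triggers the cheap step. Here is a toy sketch of that pattern. To be clear, `ToySegmenter`, its methods, and the "similar brightness" rule are hypothetical stand-ins I made up for illustration; this is not SAM's actual API or architecture.

```python
class ToySegmenter:
    """Hypothetical stand-in for an encoder/decoder split; not SAM's real API."""

    def __init__(self):
        self.embedding = None
        self.encoder_calls = 0  # counts how often the expensive step runs

    def set_image(self, image):
        # "Image encoder": run once per image and cache the result.
        # A real encoder is a large neural network; here we only rescale pixels.
        self.encoder_calls += 1
        self.embedding = [[pixel / 255 for pixel in row] for row in image]

    def predict(self, point, tolerance=0.1):
        # "Prompt encoder + mask decoder": cheap, runs once per prompt.
        # Toy rule: select pixels whose value is close to the clicked pixel.
        if self.embedding is None:
            raise RuntimeError("call set_image() before predict()")
        row, col = point
        reference = self.embedding[row][col]
        return [
            [1 if abs(value - reference) <= tolerance else 0 for value in emb_row]
            for emb_row in self.embedding
        ]


# Made-up 3x3 image with a bright region in the top-right corner.
image = [
    [20, 20, 230],
    [20, 230, 230],
    [20, 20, 20],
]

segmenter = ToySegmenter()
segmenter.set_image(image)  # expensive step, done once

bright_mask = segmenter.predict((0, 2))  # "click" on the bright region
dark_mask = segmenter.predict((2, 0))    # "click" on the dark region
```

Both prompts reuse the same cached embedding, so `encoder_calls` stays at 1 no matter how many times you click.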

For a deeper dive into the technical details, check out Meta AI’s research paper.

Future outlook:

Looking ahead, the authors envision SAM being used in a wide range of applications that require the segmentation of objects in images. Examples include the domain of AR/VR, where SAM could allow for the selection of an object based on a user’s gaze, before “lifting” it into 3D. Moreover, SAM could be used for research purposes and scientific studies, for instance by localizing objects or animals to study and track in video.

Haven’t tried it yet? Check out the demo and upload your own image to see how well it works!

Resources: Blog • Paper • Dataset • Demo

Postscript: In light of the emergence of these gargantuan foundation models, some containing hundreds of billions of parameters, we’re yet again reminded of the persisting impact of Moore’s Law - the prophecy that just keeps on giving, fueling the boundless growth of compute power that allows us to train these models in the first place. What better day to celebrate this impact than on its very anniversary? 🥂

Method chaining in Python

Have you ever found yourself working with large classes in Python? If so, then you’re probably no stranger to typing the same object name over and over again and passing intermediate results from one method to the next, only to end up with code that’s difficult to read and maintain, like in the example below:

    preprocess = Preprocessor()
    data_clean = preprocess.remove_nan(data)
    data_filtered = preprocess.filter(data_clean)
    data_transformed = preprocess.transform(data_filtered)
    data_preprocessed = data_transformed.data

This is where method chaining comes in! Method chaining is a technique that can help you write cleaner, more concise code.

Method Chaining is a technique used in object-oriented programming, where multiple methods are called in a single expression by chaining them together with the dot (.) operator.

By chaining together multiple method calls in a single expression, you can simplify your code and make it easier to understand. Take a look at the following:

    data_preprocessed = (
        Preprocessor(data)
        .remove_nan()
        .filter()
        .transform()
        .data
    )

Much cleaner! In addition, instead of having to input the data as an argument in each class method, the class object itself can be instantiated with the data, which is then used throughout the preprocessing steps.

To achieve this outcome in Python, we need to define a constructor that takes our data as an input argument, and modify the class methods to return self at the end of each method call. This allows us to call multiple methods on the same object instance in a single expression of code.

Here’s what this looks like:

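    class Preprocessor:
        def __init__(self, data):
            # Store the data once; every method then works on self.data
            self.data = data

        def remove_nan(self):
            # Drop rows with missing values in place
            self.data.dropna(inplace=True)
            # Returning self is what makes the next call in the chain possible
            return self

        ...

And that’s it! For brevity, I’m only showing one class method here, but the same applies to all others. We just need to make sure that each method returns self.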

Note: If you’re interested in replicating this code, make sure to instantiate the class with a pandas DataFrame, since remove_nan relies on the DataFrame’s dropna method.
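If you’d like to try the pattern end to end without installing anything, here is a minimal sketch on a plain Python list instead of a DataFrame. Keep in mind that the behavior of `filter` and `transform`, as well as their parameters `max_value` and `factor`, are assumptions I made up for this sketch, and that `None` stands in for missing values.

```python
class Preprocessor:
    """Chainable preprocessing steps on a list of numbers (None = missing)."""

    def __init__(self, data):
        # Copy the input so the caller's list is never modified.
        self.data = list(data)

    def remove_nan(self):
        # Drop missing values.
        self.data = [x for x in self.data if x is not None]
        return self  # returning self is what makes chaining possible

    def filter(self, max_value=100):
        # Hypothetical filter step: keep values up to max_value.
        self.data = [x for x in self.data if x <= max_value]
        return self

    def transform(self, factor=0.5):
        # Hypothetical transform step: scale every value by factor.
        self.data = [x * factor for x in self.data]
        return self


raw = [10, None, 250, 40, None, 8]

data_preprocessed = (
    Preprocessor(raw)
    .remove_nan()
    .filter()
    .transform()
    .data
)
```

One design choice worth pointing out: this version copies the input in the constructor and reassigns `self.data` in each step, so the original `raw` list stays untouched. An in-place step on a DataFrame, by contrast, also changes the DataFrame you passed in.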

That’s all for today folks. Hope you enjoyed this edition of Tech Talk with Thomas - until next time!